12 Reasons Your CER Might Fail a Notified Body Review in 2026

And what you can do to fix them before audit day

“So often I see that the most preventable audit findings come from avoidable gaps: misaligned claims, drifting scope, and unclear update planning. If you tighten those now, you turn the CER from a one-off submission that will rear its head again in 12–24 months into a controlled system you can maintain easily and defend year after year.”

Alastair Selby

Managing Director, SciMed Consultancy Ltd

EXECUTIVE SUMMARY

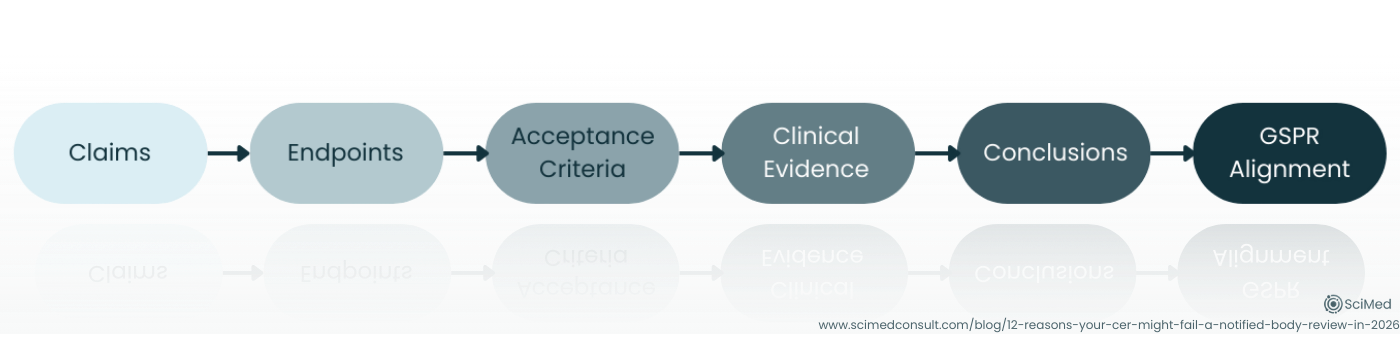

In 2026, CER success is less about “more evidence” and more about audit-ready logic: a clean, reviewable chain from claims → endpoints → acceptance criteria → conclusions → relevant GSPRs.

The fastest way to reduce audit anxiety is to eliminate document drift: ensure your CEP, CER, IFU and lifecycle updates all describe the same intended purpose, populations, device variants, and clinical narrative.

Notified Bodies increasingly reward manufacturers who translate State-of-the-Art into measurable benchmarks: clear comparators, expected outcomes, and clinically meaningful thresholds that make benefit–risk decisions defensible.

A CER becomes resilient when its method is reproducible and transparent: literature searches that can be re-run, appraisals that clearly drive weighting, and risk management that is integrated, not simply referenced.

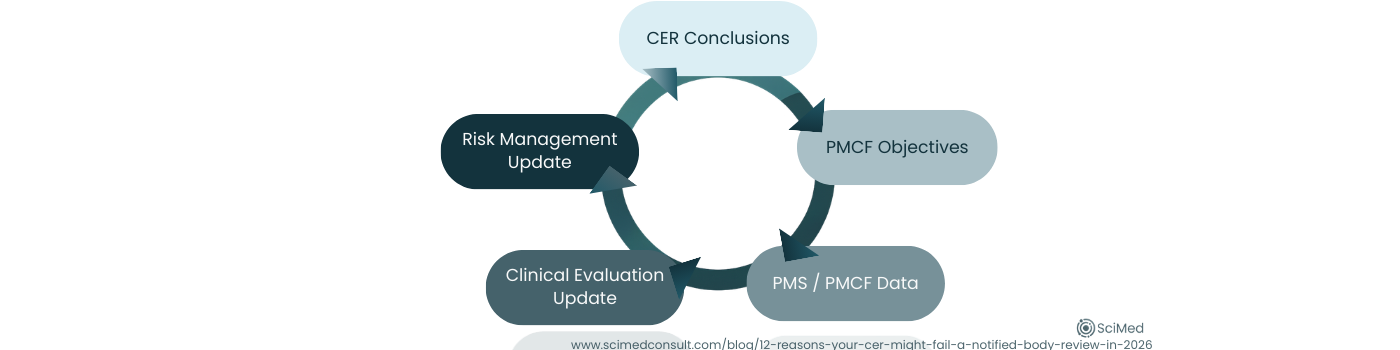

The hallmark of a mature MDR strategy is treating the Clinical Evaluation as a living maintenance loop: CER conclusions inform PMS/PMCF objectives, PMS/PMCF outputs actively feed back into CER updates and Risk Management, and any evidence gaps come with a credible, resourced mitigation plan.

PRESSURE-TEST YOUR CER BEFORE YOUR NOTIFIED BODY DOES

If you want to assess how your CER would stand up to audit scrutiny, use our MDR Clinical Evaluation Audit-Readiness Checklist.

It translates the 12 failure points below into a structured self-assessment you can run before submission.

Figure 1. Audit-ready Clinical Evaluation Reports demonstrate a clear chain of reasoning from claims → endpoints → acceptance criteria → clinical evidence → conclusions → relevant GSPRs, allowing Notified Bodies to verify conformity efficiently.

INTRODUCTION

Notified Body audits: not a date in the calendar most manufacturers look forward to. When they go well, they affirm that you are doing things right and give you confidence in your processes, but anxiety in the build-up to audits is incredibly common.

I understand that the regulatory systems we have in MedTech are not perfect, but I am an advocate for positivity within regulatory affairs, and I never want to push negative messaging or add to anyone's anxiety. Still, there is no question about the burden that ongoing updates place on manufacturers, who often describe them as “resource-intense and confusing,” especially when requirements feel distributed across multiple references and are interpreted differently by different reviewers. This has led to a perception of audits as a negative-but-necessary exchange, which is a fairly natural view for regulatory professionals at all levels of experience.

The best advice I give our clients is that preparation is key: understand where your potential weak points are, and use the tools you have available. By far the biggest part of the European RA toolbox is the published guidance. Whether it comes from the MDCG, harmonised standards or Notified Bodies themselves, using these resources to consider how your auditor will look at your technical documentation is a useful exercise.

SCIMED’S MEDTECH HORIZON - PRACTICAL INSIGHTS FOR REGULATORY LEADERS

MedTech Horizon provides practical guidance on clinical evaluation, audit readiness, and evolving MDR expectations, based on what we see in real reviews.

INDUSTRY CONTEXT & BACKGROUND

We know that Notified Bodies will always pay particular attention to Clinical Evaluation documentation, as their own designation depends on how they assess these documents in particular (see MDR Article 45(1)). Helpfully, the MDCG has prepared a Clinical Evaluation Assessment Report (CEAR) Template (MDCG 2020-13), which Notified Bodies use to document their assessment of the clinical evaluation.

An additional advantage we at SciMed have as consultants is that we are often called in to remediate after audits have not gone as well as our clients hoped. This perspective gives us an understanding and appreciation of the common pitfalls manufacturers encounter at audit, and we have combined this experience with the content of MDCG 2020-13 to highlight twelve common issues in Clinical Evaluation audits that we either saw in 2025 or anticipate becoming more prevalent in 2026.

REASONS YOUR CER MIGHT FAIL A NOTIFIED BODY REVIEW

Reason 1: Your Claims Outpace Your Endpoints

A sophisticated CER can still stumble if the clinical questions being asked and the chosen endpoints don’t directly test the claims being made, especially claims that are comparative (“better than”), broad (“reduces complications”), or user-dependent (“easy to use in routine practice”).

What reviewers look for is a clear chain (claim → endpoint → acceptance criteria → demonstration/conclusion) that ties back to the relevant GSPRs.

The opportunity here is to tighten your claims set until every claim is backed by clearly identified supporting evidence.
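To make the claim → endpoint → acceptance criteria → conclusion → GSPR chain concrete, the Python sketch below models each claim as a record and lists any link that is still empty. It is a minimal illustration under stated assumptions, not a SciMed tool: the `Claim` fields, the `traceability_gaps` helper and the example claims, evidence IDs and GSPR references are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """One device claim and its (hypothetical) traceability links."""
    claim_id: str
    text: str
    endpoint: str = ""              # measurable endpoint that tests the claim
    acceptance_criterion: str = ""  # pre-defined threshold for "demonstrated"
    evidence_ids: list[str] = field(default_factory=list)  # supporting sources cited in the CER
    conclusion: str = ""            # CER conclusion for this claim
    gsprs: list[str] = field(default_factory=list)         # GSPRs the claim supports


def missing_links(claim: Claim) -> list[str]:
    """Return the names of the traceability links that are still empty."""
    links = {
        "endpoint": claim.endpoint,
        "acceptance_criterion": claim.acceptance_criterion,
        "evidence": claim.evidence_ids,
        "conclusion": claim.conclusion,
        "gspr": claim.gsprs,
    }
    return [name for name, value in links.items() if not value]


def traceability_gaps(claims: list[Claim]) -> dict[str, list[str]]:
    """Map each incompletely traced claim ID to its missing links."""
    gaps = {}
    for claim in claims:
        missing = missing_links(claim)
        if missing:
            gaps[claim.claim_id] = missing
    return gaps


# Hypothetical example: C1 is fully traced; C2 cites evidence but has no chain around it.
claims = [
    Claim("C1", "Reduces post-operative complications",
          endpoint="30-day complication rate",
          acceptance_criterion="Rate at or below the SotA benchmark",
          evidence_ids=["LIT-012", "PMCF-01"],
          conclusion="Acceptance criterion met",
          gsprs=["GSPR 1"]),
    Claim("C2", "Easy to use in routine practice", evidence_ids=["USAB-03"]),
]

print(traceability_gaps(claims))
# {'C2': ['endpoint', 'acceptance_criterion', 'conclusion', 'gspr']}
```

Running the same check over the full claims set before submission gives a short list of claims to either support properly or narrow.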

Reason 2: The Clinical Evaluation Plan Doesn’t “Control” the Clinical Evaluation Report

A related issue is scope drift, which often creeps in quietly. This could look like a broader patient population than the plan accounts for, an extra indication, or a device variant that is implied but not evaluated. In review, these small drifts add up to a big question: what exactly was clinically evaluated?

What we commonly see reviewers look for is consistency across the Clinical Evaluation Plans (CEPs), Clinical Evaluation Reports (CERs), IFUs and other technical documentation.

Pro Tip

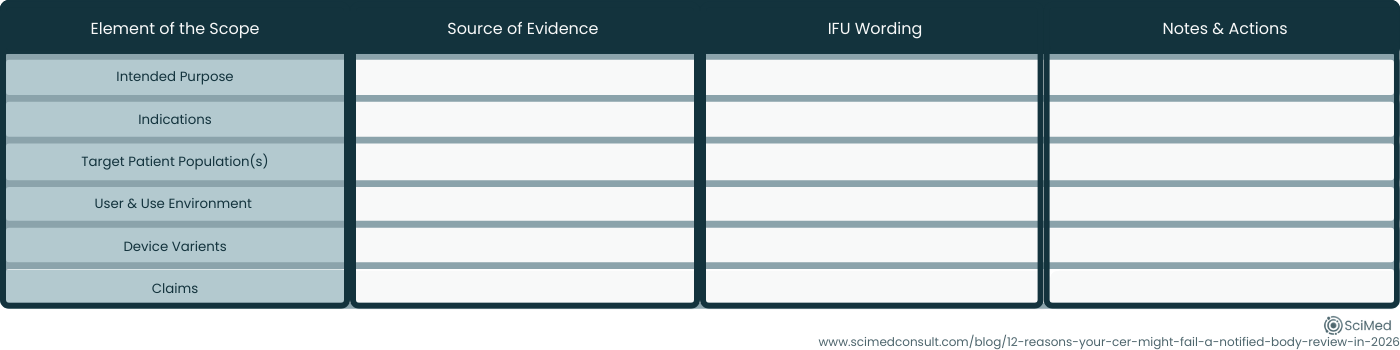

Before submission, build a one-page internal “scope-lock” table (intended purpose, indications, populations, key variants, key claims) and cross-check that each cell has an associated clinical evidence source within the CER.

Figure 2. A simple internal “scope-lock” matrix helps ensure CEP, CER, and IFU statements remain aligned, preventing scope drift across intended purpose, patient population, device variants, and key claims.
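The scope-lock cross-check can also be scripted. The Python sketch below assumes each document's scope has been transcribed by hand into sets of values per field; `scope_drift` reports values that appear in some documents but not others, and `unsupported_cells` lists CER scope entries without a cited evidence source. All field names, document contents and evidence IDs are hypothetical.

```python
SCOPE_FIELDS = ("intended_purpose", "indications", "populations", "variants", "claims")


def scope_drift(documents: dict[str, dict[str, set[str]]]) -> dict[str, dict[str, set[str]]]:
    """For each scope field, report the values each document is missing
    relative to the union of all documents."""
    drift = {}
    for field_name in SCOPE_FIELDS:
        per_doc = {doc: scope.get(field_name, set()) for doc, scope in documents.items()}
        union = set().union(*per_doc.values())
        missing = {doc: union - values for doc, values in per_doc.items() if union - values}
        if missing:
            drift[field_name] = missing
    return drift


def unsupported_cells(cer_scope: dict[str, set[str]],
                      evidence_map: dict[tuple[str, str], list[str]]) -> list[tuple[str, str]]:
    """List (field, value) pairs in the CER scope with no cited evidence source."""
    return sorted(
        (field_name, value)
        for field_name, values in cer_scope.items()
        for value in values
        if not evidence_map.get((field_name, value))
    )


# Hypothetical scope tables: the IFU has drifted (extra population and variant).
documents = {
    "CEP": {"indications": {"Indication A"}, "populations": {"Adults"}, "variants": {"S", "M", "L"}},
    "CER": {"indications": {"Indication A"}, "populations": {"Adults"}, "variants": {"S", "M", "L"}},
    "IFU": {"indications": {"Indication A"}, "populations": {"Adults", "Adolescents"},
            "variants": {"S", "M", "L", "XL"}},
}
# Hypothetical map from CER scope cells to the evidence sources that support them.
evidence_map = {
    ("indications", "Indication A"): ["LIT-004"],
    ("populations", "Adults"): ["LIT-004", "PMCF-01"],
    ("variants", "S"): ["LIT-004"],
    ("variants", "M"): ["LIT-004"],
}

print(scope_drift(documents))
print(unsupported_cells(documents["CER"], evidence_map))  # [('variants', 'L')]
```

In this example the first check shows that the CEP and CER never covered adolescents or the XL variant that the IFU mentions, and the second shows that variant L is in scope but has no cited evidence.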

Reason 3: State-of-the-Art is Described, but No Benchmarks are Defined

A background literature summary is not automatically a State-of-the-Art (SotA) demonstration. The missing step is translating the clinical landscape described in the literature into benchmarks that define what “acceptable” safety and performance look like.

Notified Bodies like to see evidence that benefit–risk analyses and device performance & safety are judged against a clear clinical context, not against general statements.

This means manufacturers should define benchmarks from logical comparators, expected outcomes, and clinically meaningful thresholds that are commonly reported in the literature and match the device’s intended purpose.
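As a worked illustration of turning literature into a benchmark, the Python sketch below pools a complication rate across hypothetical SotA studies and compares the device's result against it. It assumes a naive pooled proportion (total events divided by total patients, ignoring between-study heterogeneity), a Wilson 95% upper bound for the device rate, and an arbitrary margin of 2 percentage points; a real SotA analysis may need a formal meta-analysis and a clinically justified margin.

```python
import math


def pooled_rate(studies: list[tuple[int, int]]) -> float:
    """Naive pooled proportion: total events / total patients across studies."""
    events = sum(e for e, _ in studies)
    patients = sum(n for _, n in studies)
    return events / patients


def wilson_upper(events: int, n: int, z: float = 1.96) -> float:
    """Upper limit of the Wilson score interval for a binomial proportion."""
    p = events / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre + half_width


# Hypothetical SotA literature: (complication events, patients) per study.
sota_studies = [(12, 400), (9, 250), (20, 600)]
benchmark = pooled_rate(sota_studies)          # 41 / 1250 = 0.0328

# Hypothetical device data and pre-specified acceptance criterion.
device_events, device_patients = 5, 300
margin = 0.02                                  # illustrative absolute margin only
upper = wilson_upper(device_events, device_patients)

meets_criterion = upper <= benchmark + margin
print(f"benchmark={benchmark:.4f}, device upper bound={upper:.4f}, met={meets_criterion}")
```

With these numbers the device's upper bound (about 0.038) sits above the benchmark itself but within the margin, so the outcome depends entirely on the margin chosen. That is one reason to pre-specify and justify the threshold in the CEP rather than after the data are in.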

Reason 4: Your Literature Review isn’t Reproducible

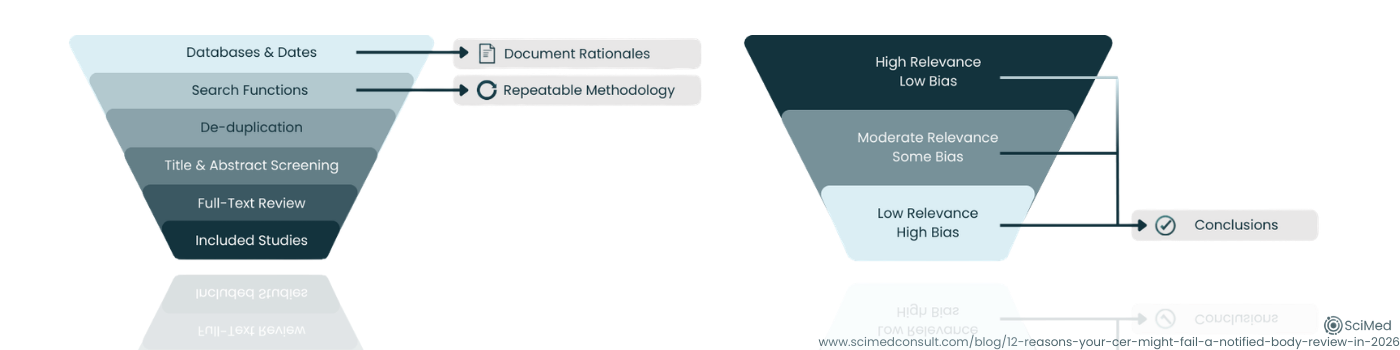

If your search strategy can’t be re-run to produce a similar evidence set, it’s hard for a reviewer to trust that the outcome is unbiased. This is a common complaint we hear from clients: there is a real lack of clear “how-to” guidance, which leaves them feeling in the dark when interpretations presented at audit vary.

The constants we see are a clearly articulated and justified database selection, well-documented search strings and search periods, clear inclusion/exclusion criteria, defined screening logic, and transparent handling of duplicates and full-text retrieval.

Always remember that the literature review should be a controlled, well-documented, methodical process, not a narrative.
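One way to make a search re-runnable is to record the protocol as structured data and log duplicate handling explicitly. The Python sketch below assumes a hypothetical `SearchProtocol` record whose SHA-256 fingerprint lets a later re-run confirm it used identical parameters, and a simple deduplication rule (matching on case-insensitive DOI or normalised title). The search string, criteria and records are illustrative only, and real deduplication often needs fuzzier matching.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SearchProtocol:
    """Hypothetical record of one database search, kept with the CER."""
    database: str
    search_string: str
    date_from: str   # ISO dates keep the search period unambiguous
    date_to: str
    inclusion: tuple[str, ...]
    exclusion: tuple[str, ...]

    def fingerprint(self) -> str:
        """Short SHA-256 of the protocol; identical protocols give identical fingerprints."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def normalise_title(title: str) -> str:
    """Lower-case, drop punctuation and collapse whitespace."""
    kept = "".join(ch for ch in title.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())


def deduplicate(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into unique and duplicate lists, matching on DOI or normalised title."""
    seen_dois, seen_titles = set(), set()
    unique, duplicates = [], []
    for rec in records:
        doi = (rec.get("doi") or "").strip().lower()
        title = normalise_title(rec["title"])
        if (doi and doi in seen_dois) or title in seen_titles:
            duplicates.append(rec)  # logged, not silently dropped
            continue
        if doi:
            seen_dois.add(doi)
        seen_titles.add(title)
        unique.append(rec)
    return unique, duplicates


protocol = SearchProtocol(
    database="PubMed",
    search_string='("device x" OR "device-x") AND (safety OR performance)',
    date_from="2015-01-01",
    date_to="2025-12-31",
    inclusion=("human clinical data", "intended population"),
    exclusion=("animal studies", "conference abstracts without full text"),
)

records = [
    {"source": "PubMed", "doi": "10.1000/example.1", "title": "Outcomes of Device X: A Cohort Study"},
    {"source": "Embase", "doi": "10.1000/EXAMPLE.1", "title": "Outcomes of device X, a cohort study"},
    {"source": "Embase", "doi": None, "title": "Long-term follow-up of Device X"},
    {"source": "PubMed", "doi": "10.1000/example.2", "title": "Long-term Follow-up of Device X."},
]
unique, duplicates = deduplicate(records)
print(protocol.fingerprint(), len(unique), len(duplicates))
```

Storing the fingerprint and the duplicate list alongside the search report gives a reviewer something concrete to re-run and compare against.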

Reason 5: You Appraise the Literature, But Don’t Show How You Weighted It

A common pattern we’ve seen over the past 12 months is that, whilst a CER may include an appraisal checklist, its conclusions don’t clearly follow from the appraisal. Reviewers then ask: “If you downgraded this evidence, why is it still driving the conclusion?” This is especially relevant if you have two data sources that potentially contradict each other.

We tackle this by making our weighting logic explicit. The reviewer must be able to see how quality and relevance scores determine the influence of each dataset, and how the scores of individual sources combine into meaningful conclusions that are representative of all the identified publications.

Figure 3. A reproducible literature review process identifies relevant studies systematically, while transparent evidence weighting ensures that higher-quality, more relevant data appropriately influence CER conclusions.
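A simple way to make weighting visible is to derive each source's weight from its appraisal scores and show the weighted result alongside the unweighted one. The Python sketch below assumes a hypothetical 1 to 3 scale for quality and relevance and a multiplicative weight (quality × relevance, normalised to sum to 1); neither the scale nor the formula is prescribed by MDCG 2020-13 or MEDDEV 2.7/1, and the sources and rates are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Source:
    source_id: str
    quality: int         # hypothetical appraisal score: 1 (low) to 3 (high)
    relevance: int       # hypothetical appraisal score: 1 (low) to 3 (high)
    outcome_rate: float  # reported rate for the endpoint of interest


def weights(sources: list[Source]) -> dict[str, float]:
    """Weight each source by quality x relevance, normalised to sum to 1."""
    raw = {s.source_id: s.quality * s.relevance for s in sources}
    total = sum(raw.values())
    return {sid: w / total for sid, w in raw.items()}


def weighted_rate(sources: list[Source]) -> float:
    """Weighted mean outcome rate, so down-graded sources have less influence."""
    w = weights(sources)
    return sum(w[s.source_id] * s.outcome_rate for s in sources)


sources = [
    Source("RCT-01", quality=3, relevance=3, outcome_rate=0.04),
    Source("Cohort-02", quality=2, relevance=3, outcome_rate=0.05),
    Source("CaseSeries-03", quality=1, relevance=1, outcome_rate=0.12),  # down-graded outlier
]

unweighted = sum(s.outcome_rate for s in sources) / len(sources)
print({sid: round(w, 4) for sid, w in weights(sources).items()})
print(f"unweighted={unweighted:.4f}, weighted={weighted_rate(sources):.4f}")
```

Presenting both figures lets a reviewer see that the down-graded case series pulls the unweighted mean up to 0.07 but moves the weighted estimate far less, which answers the “why is it still driving the conclusion?” question directly.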

Reason 6: Equivalence is Used as a Shortcut Instead of a Controlled Argument

The MDR’s changes to the equivalence requirements make leveraging equivalent-device data more challenging. And whilst the equivalence route is less likely to be viable for manufacturers today than it used to be, it is not completely closed.

This is compounded by the fact that Notified Bodies are explicitly expected to assess the suitability of using equivalent-device data and document conclusions on equivalence and adequacy.

What this means in reality is that your clinical development strategy should be decided early, either:

Build equivalence with the level of access and granularity the MDR expects, assuming this is possible, or

Pivot to a planned evidence generation pathway.

Reason 7: Novelty and New Indications are not Isolated and Supported

MDCG 2020-13 is clear: if you claim some degree of change, innovation or new indications, such as a new patient population or use environment, reviewers expect to see evidence that also shows the safety and performance of the device under these new conditions.

The best way to ensure that this doesn’t become problematic at audit is to ring-fence novelty within lifecycle updates. Identify what is truly new, then ensure those elements have supporting evidence to justify the changes, which may involve a change of scope for your literature search methodology.

Reason 8: Risk Management is “Referenced,” Not Integrated

MDCG 2020-13 is clear: the interface between Clinical Evaluation documentation and the Risk Management process is pivotal, and the two processes should be truly integrated, not siloed. In practice, this means residual risks are properly contextualised within the clinical evaluation, and the risk mitigations themselves are clinically credible.

Reason 9: PMCF and PMS are “Tagged On,” Not a Continuation of the CER’s Clinical Evidence Gathering

The CEAR structure explicitly checks PMS, PMCF and the plan for updating and maintaining the Clinical Evaluation in the future. If post-market signals don’t feed back into the CER, or if CER conclusions don’t generate PMCF questions, your lifecycle logic looks weak. Address this by treating the Clinical Evaluation as part of a maintenance loop: the CER poses questions, PMS and PMCF activities are designed to answer them, and their outputs feed back into future CER updates.

Figure 4. Under MDR, clinical evaluation operates as a continuous lifecycle loop, where CER conclusions inform PMCF objectives, PMS data feeds updates, and outputs align with risk management and IFU communication.

Reason 10: Your Update Strategy Doesn’t Feel “Owned” or Trigger-Based

Manufacturers repeatedly describe the maintenance burden and “evergreen” clinical evidence management as incredibly resource-intensive.

In 2026, reviewers not only want to see regularly scheduled updates, but are also reassured by specificity: what triggers an update outside this schedule, who owns it, and how quickly does it happen? Defining triggers can be advantageous because it shows you are in control of the process. Triggers could include:

New risks,

Changes to your processes,

New indications,

A change in cadence to PSUR signals, and

Complaint & vigilance trends.
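Trigger definitions are easier to audit when they are written down as data rather than prose. The Python sketch below encodes the five triggers listed above with a hypothetical owner and target response time for each, plus a scheduled update interval; the roles, day counts and event names are illustrative assumptions, not MDR requirements.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class UpdateTrigger:
    name: str
    owner: str          # hypothetical role accountable for opening the CER update
    response_days: int  # hypothetical target time from trigger to update start


TRIGGERS = {
    "new_risk": UpdateTrigger("New risk", "Risk Management Lead", 30),
    "process_change": UpdateTrigger("Change to processes", "Quality Manager", 60),
    "new_indication": UpdateTrigger("New indication", "Clinical Affairs Lead", 30),
    "psur_signal_change": UpdateTrigger("Change in PSUR signal cadence", "PMS Lead", 45),
    "complaint_trend": UpdateTrigger("Complaint & vigilance trend", "PMS Lead", 45),
}


def due_actions(events: list[tuple[str, date]], last_update: date,
                schedule_days: int = 365) -> list[tuple[str, str, date]]:
    """Return (trigger, owner, due date) for each triggering event plus the
    next scheduled update, sorted by due date."""
    actions = []
    for kind, raised_on in events:
        trigger = TRIGGERS.get(kind)
        if trigger is None:
            continue  # not a defined trigger; left to routine PMS review
        actions.append((trigger.name, trigger.owner,
                        raised_on + timedelta(days=trigger.response_days)))
    actions.append(("Scheduled update", "CER Owner", last_update + timedelta(days=schedule_days)))
    return sorted(actions, key=lambda action: action[2])


# Hypothetical events raised since the last CER update.
events = [("complaint_trend", date(2026, 3, 2)), ("new_risk", date(2026, 1, 15))]
for name, owner, due in due_actions(events, last_update=date(2025, 6, 1)):
    print(f"{due.isoformat()}  {name:<30} {owner}")
```

Keeping the triggers in one table means the same definitions can be quoted in the CEP, the PMS plan and the CER update procedure without drifting apart.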

Reason 11: The Intended Use Doesn’t Match the Clinical Narrative Presented in the Clinical Evaluation

MDCG 2020-13 explicitly flags that the IFU (including intended purpose, proper use, warnings & precautions, and user training) must reflect the clinical evidence and risk profile. Is every aspect of the Intended Use substantiated by identified clinical evidence? It is always a good use of time to conduct a “communication coherence check”: if a clinician read only the IFU, would they understand the same benefits, limitations, and key risks your CER concludes?

Reason 12: You Identify Gaps (or They Are Clearly Visible), But Don’t Present a Credible Gap-Mitigation Pathway

A CER that concludes there are evidentiary gaps may be scientifically honest, but from a regulatory perspective (as well as commercially and operationally) it must be paired with a mitigation plan; without one, findings are all but guaranteed. One strategic differentiator is providing a Gap Mitigation Plan via post-market pathways (PMCF survey, registry, RWD strategy, targeted study concept) rather than stopping at the diagnosis.

This is a critical step in the Clinical Evaluation, and you might be surprised how often it is missed. To avoid this pitfall, tackle any gaps head on and plan the next steps: timeline, feasible methods, and how each activity maps back to the gaps, claims and key risks.
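The mapping from gaps to mitigation activities can be checked mechanically before the plan goes into the CER. The Python sketch below assumes hypothetical `Gap` and `Activity` records and flags gaps with no activity, activities that reference no known gap, and timelines that end before they start; the gap descriptions, methods and dates are invented for illustration (A2 deliberately points at a mis-keyed gap ID).

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Gap:
    gap_id: str
    description: str
    claims: list[str] = field(default_factory=list)  # claims affected by the gap
    risks: list[str] = field(default_factory=list)   # key risks affected by the gap


@dataclass
class Activity:
    activity_id: str
    method: str           # e.g. PMCF survey, registry, RWD analysis, targeted study
    start: date
    end: date
    addresses: list[str]  # gap IDs this activity is intended to close


def check_plan(gaps: list[Gap], activities: list[Activity]) -> list[str]:
    """Return human-readable problems with the gap-mitigation mapping."""
    gap_ids = {g.gap_id for g in gaps}
    covered = {gid for a in activities for gid in a.addresses}
    problems = [f"{gid}: no mitigation activity" for gid in sorted(gap_ids - covered)]
    for a in activities:
        unknown = sorted(set(a.addresses) - gap_ids)
        if not a.addresses:
            problems.append(f"{a.activity_id}: not linked to any gap")
        elif unknown:
            problems.append(f"{a.activity_id}: references unknown gap(s) {', '.join(unknown)}")
        if a.end <= a.start:
            problems.append(f"{a.activity_id}: end date is not after start date")
    return problems


gaps = [
    Gap("G1", "Limited performance data beyond 2 years", claims=["C1"], risks=["R3"]),
    Gap("G2", "Few patients aged 75+ in the literature", claims=["C1"], risks=["R5"]),
]
activities = [
    Activity("A1", "PMCF registry", date(2026, 4, 1), date(2029, 3, 31), addresses=["G1"]),
    Activity("A2", "PMCF survey", date(2026, 6, 1), date(2027, 5, 31), addresses=["G3"]),
]
for problem in check_plan(gaps, activities):
    print(problem)
```

An empty result does not prove a plan is credible, but a non-empty one is an easy finding to prevent.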

If you would value an independent second look at your claims-to-evidence traceability, equivalence justification, or PMCF integration, you can request a regulatory review here: focused, practical feedback designed to strengthen what you already have in place.

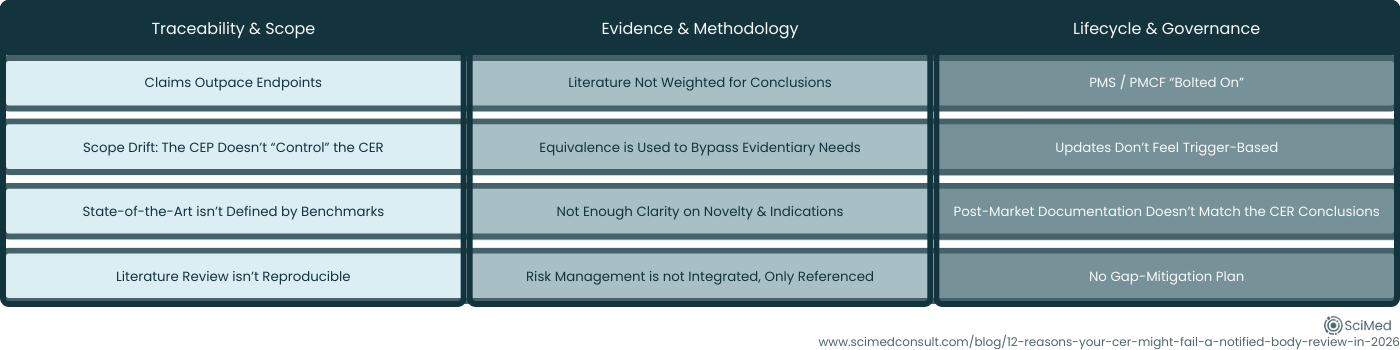

Figure 5. Twelve common CER audit findings grouped into three themes: Traceability & Scope, Evidence & Method, and Lifecycle Governance.

CONCLUSIONS

If you’re looking to better understand Clinical Evaluation requirements and expectations in 2026, or hoping to prevent CER audit findings, the takeaway is reassuringly practical: Most CER review failures are fixable when you treat Clinical Evaluation as a controlled, lifecycle system, and not a one-off report.

In 2026, preparedness means:

Keeping your logic traceable (claims → evidence → conclusions),

Keeping your documents coherent and aligned, and

Keeping your evidence identification and generation alive throughout the entire product lifecycle.

USEFUL REFERENCES

The MDCG’s Clinical Evaluation Assessment Report Template (MDCG 2020-13) can be found here.

The EU MDR (Reg. (EU) 2017/745) can be found here.

The Draft Clinical Evaluation Standard (ISO/DIS 18969) can be found here.

The legacy (but still widely used as a method reference) Clinical Evaluation Guide, MEDDEV 2.7/1 Rev. 4, can be found here.

STRENGTHEN YOUR CER BEFORE AUDIT

If you are preparing for MDR submission or want to pressure-test your CER against current Notified Body expectations, you can speak with our team for a focused review.